This is the third post in this series; the first two, which lay out the background to the issue, are available here and here.

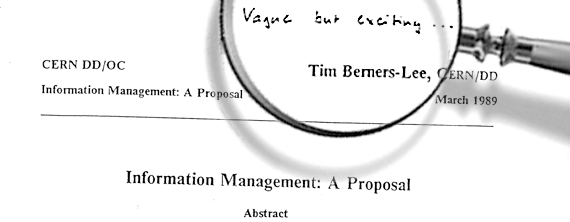

The question, therefore, becomes: is it time to look beyond the ‘internet’ as it exists today, towards newer models of communication? The ‘models’ I refer to here are not entirely novel; nothing under the sun is. They still rely on the TCP/IP protocol suite, still use parts of the ‘internet’, and still run on the network infrastructure laid down for it, learning from that network and improving on it. In fact, these models recall the original vision of the internet so closely that we can call them legacies of the ideas behind the ‘original internet’, now challenging the dominance of the ‘neo-internet’. So is it time to focus on these models, develop them, and mark the decline of the ‘neo-internet’?